Effective fraud detection and financial control initiatives leverage advanced analytics and machine learning techniques to derive valuable and actionable information for managers. The reliability of the results produced by these data intensive techniques is DIRECTLY dependent on the quality and quantity of data available for analysis. Today’s enterprises churn out humongous volumes of data (“big data”) during the normal course of business… unfortunately, most of this data cannot be used for advanced analysis it its raw form. IBM estimates that US enterprises lose as much as $3.1 Trillion every year due to poor data quality!

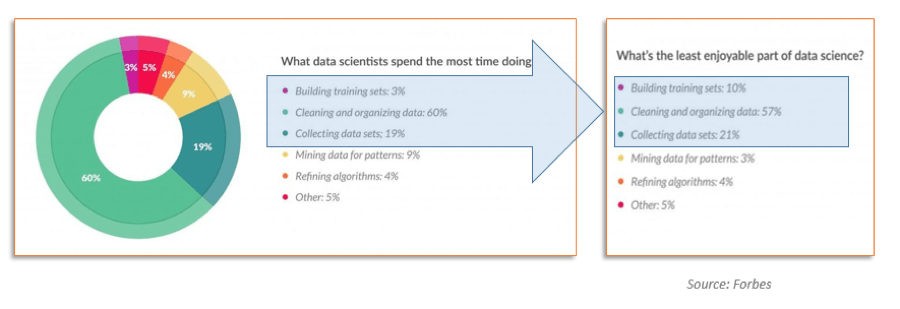

The task of acquiring, cleansing, shaping and bending of the raw operational data for analytics or other business purposes is known as DATA PREPARATION. According to Forbes magazine, data scientists spend 80% of their time in preparing data and only 20% of their time actually doing analytics!

So, what exactly is involved in “preparing” the data?

Although exact process details may vary for different enterprises, all Data Preparation processes can be decomposed into five steps that are briefly discussed in the following sections.

1. Identify

During the Identify step you have to find the data which is best-suited for your specific analytical purpose. Data scientists cite this as a frustrating and time-consuming exercise. A crucial requirement for identifying the needed data is the metadata repository – that is, the creation and maintenance of a comprehensive, well-documented data catalog that contains critical information about the underlying data. In addition to data profiling statistics and other contents, the metadata repository provides a descriptive index that points to the location of available data. The available data is then cataloged, together with data profiling statistics and other contents.

Data identification is not just about finding the data and its source, but also about making it easier to be discovered later, whenever a need arises. The metadata repository must be continuously updated as the enterprise data changes or when new data sources are identified, even if there are no immediate requirement for the data. It is all about having the data available when needed.

2. Confine

During the Confine step, the data selected during previous step is extracted and stored in a new temporary repository where it can be analyzed without affecting regular operations of the enterprise. The term “confine” conjures up the image of temporarily imprisoning a copy of the data that feeds the rest of the Data Preparation process. For this step, a temporary staging area or workspace is required for the processing that happens in the next step of Data Preparation, which is described below. When ongoing quarantine of transitional or delivered data is required, it should make use of shared and managed storage. An evolving practice here comprises the use of in-memory storage areas or cloud-based storage for much faster real-time shaping of the data before it’s sent on to other processes.

3. Refine

During the Refine step, the identified data that has been extracted and confined in the previous step is examined for quality and then cleansed and transformed as may be required, in order to support its future usage for analysis and inquiry. During this step, you must determine how appropriate the data is for its intended purpose or use. As a first step in the data refinement process, data quality rules are applied while integrating multiple data sources into a single data model, and every effort is made to eliminate bad data.

The data is also transformed during this step, if needed, in order to make it easier to use during analysis. For example, individual sales transactions may be aggregated into daily, weekly, weekend and monthly sub-totals.

The data may also be augmented for ad hoc querying, standard reporting and advanced analytics, and then moved to its permanent repository which may be an enterprise data warehouse or data lake or other similar repository. The point is: Don’t reinvent the wheel, reuse what’s around. The more reusable your refining processes are, the less reliant business is on IT for custom build processes and ad hoc requests. Ideally, the enterprise should strive for making data quality components a library of functions and repository of rules that can be reused to cleanse data.

4. Document

Documentation is an important and often ignored step in the Data Preparation process. In order to truly make the data available to everyone who may need it, it is critical to record both business and technical metadata about the identified, confined and refined data to help potential users of the data to understand what data is available to them for analysis. This includes:

- Nominal definitions

- Business terminology

- Source data lineage

- History of changes applied during distillation

- Relationships with other data

- Data usage recommendations

- Associated data governance policies.

All the metadata is shared using the metadata repository, or data catalog. Shared metadata enables faster data preparation, that is consistent when repeated, and also eases collaboration when multiple users are involved in different steps of data preparation.

5. Release

The final Release step is about structuring the refined data into the format needed by the consuming process or user. The delivered data set(s) should also be evaluated for persistent confinement and, if confined, the supporting metadata should be added to the data catalog.

These steps allow the data to be discovered by other users. Delivery must also stand by data governance policies, such as those minimizing the exposure of sensitive information.

You must make Data Preparation a repeatable process which is formalized as an enterprise “best practice.” Users having access to shared metadata, continuously managed storage, and reusable cleaning logic will make data preparation a reliable and reusable process. In turn, the users will be empowered with clean data and they can focus on the more important task of analyzing the data to gain actionable insights. This helps in conditioning better data readiness for the enterprise.

Shared metadata, persistent managed storage, and reusable transformation and cleansing logic will make Data Preparation an efficient, consistent and repeatable process which users can rely on for more effective financial control, fraud detection efforts and other analytical needs.